lemur-70b-v1


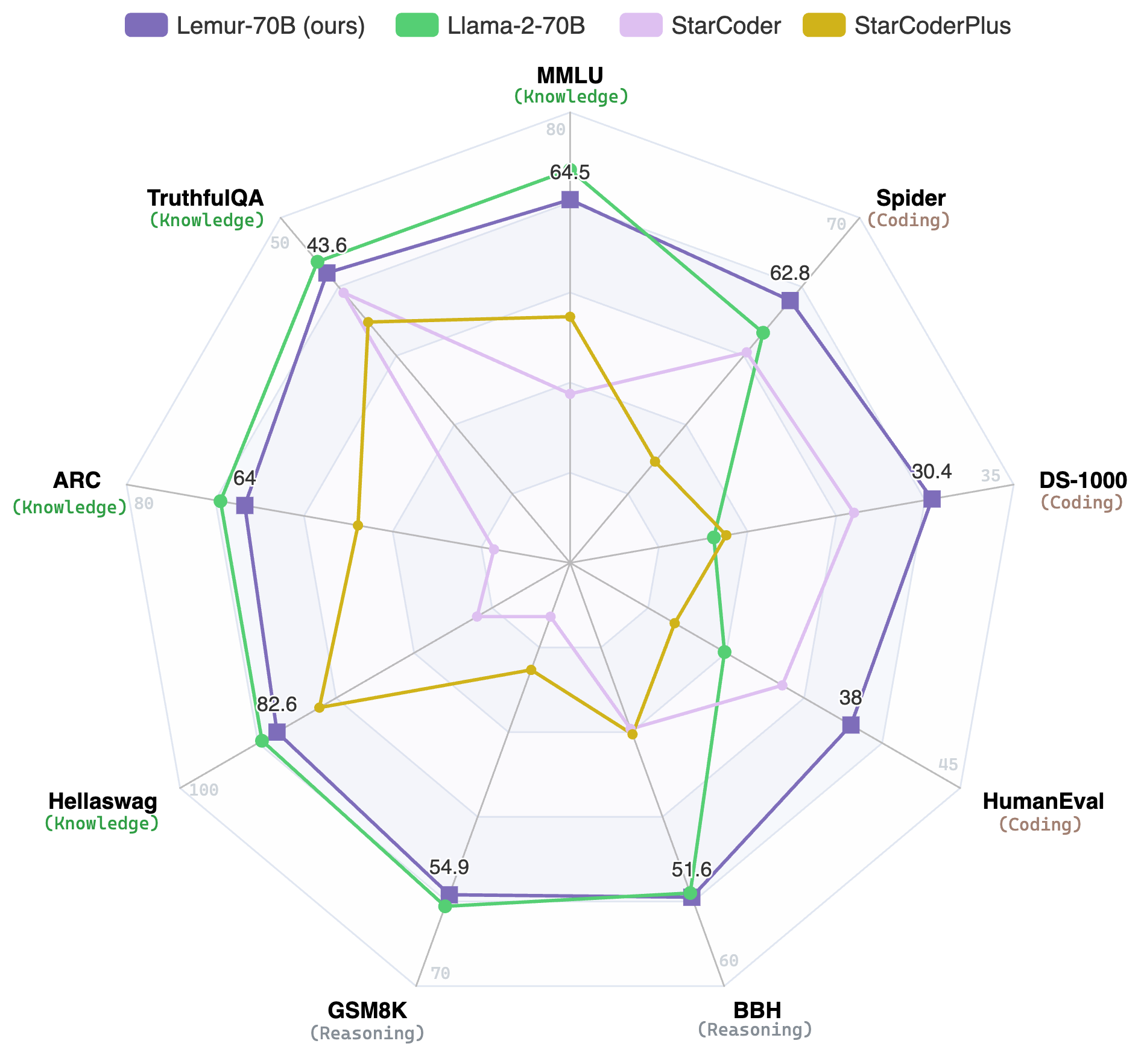

📄Paper: https://arxiv.org/abs/2310.06830

👩‍💻Code: https://github.com/OpenLemur/Lemur

Use

Setup

First, install the libraries listed in requirements.txt in the GitHub repository:

pip install -r requirements.txt

Intended use

Because the model is not trained on an instruction-following corpus, it will not respond well to questions such as "What is the Python code to do quick sort?". Give it text or code to continue instead, as in the Generation examples.
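As a rough illustration of completion-style prompting, the quick sort question can be recast as the opening of a Python function for the model to continue. The helper name `to_completion_prompt` and the prompt layout (a signature followed by a docstring) are assumptions made for this sketch; they are not part of the Lemur repository or a format prescribed by the authors.

```python
def to_completion_prompt(task: str, signature: str) -> str:
    """Recast a task description as the opening of a Python function.

    Hypothetical helper: the layout is an illustrative assumption,
    not a prompt format documented for lemur-70b-v1.
    """
    signature = signature.strip()
    if not signature.endswith(":"):
        signature += ":"
    # The docstring carries the task; the model is left to write the body.
    return f'{signature}\n    """{task.strip()}"""\n'


prompt = to_completion_prompt(
    "Sort a list of numbers in ascending order using quick sort.",
    "def quick_sort(items)",
)
print(prompt)
```

The resulting string can be passed to the tokenizer in place of a question, so the model sees the start of a function rather than an instruction.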

Generation

from transformers import AutoTokenizer, AutoModelForCausalLM

# load_in_8bit=True requires the bitsandbytes package
tokenizer = AutoTokenizer.from_pretrained("OpenLemur/lemur-70b-v1")
model = AutoModelForCausalLM.from_pretrained("OpenLemur/lemur-70b-v1", device_map="auto", load_in_8bit=True)

# Text Generation Example
prompt = "The world is "
# Move the tokenized prompt to the device that holds the model's first layer
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
output = model.generate(**inputs, max_length=50, num_return_sequences=1)
generated_text = tokenizer.decode(output[0], skip_special_tokens=True)
print(generated_text)

# Code Generation Example
prompt = """
def factorial(n):
    if n == 0:
        return 1
"""
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
output = model.generate(**inputs, max_length=200, num_return_sequences=1)
generated_code = tokenizer.decode(output[0], skip_special_tokens=True)
print(generated_code)
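A base model usually keeps generating after the function it was asked to complete, so the code example may return extra definitions up to the `max_length` limit. The sketch below shows one common way to trim a completion at the first stop sequence. The function name `truncate_at_stop` and the default stop sequences are assumptions chosen for illustration; they do not come from the Lemur code base.

```python
def truncate_at_stop(completion, stop_sequences=("\ndef ", "\nclass ", "\nif __name__", "\nprint(")):
    """Cut a generated completion at the earliest stop sequence.

    Hypothetical helper: the stop sequences mark the start of a new
    top-level Python statement and are an illustrative choice.
    """
    cut = len(completion)
    for stop in stop_sequences:
        idx = completion.find(stop)
        if idx != -1:
            cut = min(cut, idx)
    # Drop trailing whitespace and end with a single newline.
    return completion[:cut].rstrip() + "\n"


# Stand-in for text the model might produce after the factorial prompt.
sample = (
    "    else:\n"
    "        return n * factorial(n - 1)\n"
    "\n"
    "def fibonacci(n):\n"
    "    return n\n"
)
print(truncate_at_stop(sample))
```

The helper expects only the newly generated text. One way to obtain it, assuming the usual decoder-only behavior where `generate` returns the prompt tokens followed by the new ones, is to decode `output[0]` from the prompt's token count onward rather than decoding the whole sequence.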

License

The model is licensed under the Llama-2 community license agreement.

Acknowledgements

The Lemur project is an open collaborative research effort between XLang Lab and Salesforce Research. We thank Salesforce, Google Research and Amazon AWS for their gift support.
